Cloudflare just dropped Cloudflare Mesh, and the internet is already buzzing with takes. Some people are saying that existing mesh VPNs are dead. Others are wondering what this means for peer-to-peer networking. So, instead of speculating, we did what we always do when the hype gets loud: we tested it.

We set up real machines across AWS, GCP, Hetzner, and residential networks in Europe and ran benchmarks across NetBird, Tailscale, and Cloudflare Mesh. Same machines, same methodology, same conditions. Here's what we found.

TL;DR: For regional and same-country connections, P2P networks like NetBird are 2-5x faster than Cloudflare Mesh thanks to direct peer-to-peer WireGuard-based tunnels. On long international routes with poor direct peering (like Japan to Europe), Cloudflare's global backbone wins big, especially to data center endpoints. NetBird and Tailscale perform nearly identically across all tests. Your best choice depends on where your devices are and what you're connecting.

Peer-to-Peer vs Edge Routing: Why It Matters

Before we get into the numbers, you need to understand the fundamental architectural difference here because it explains basically everything you're about to see.

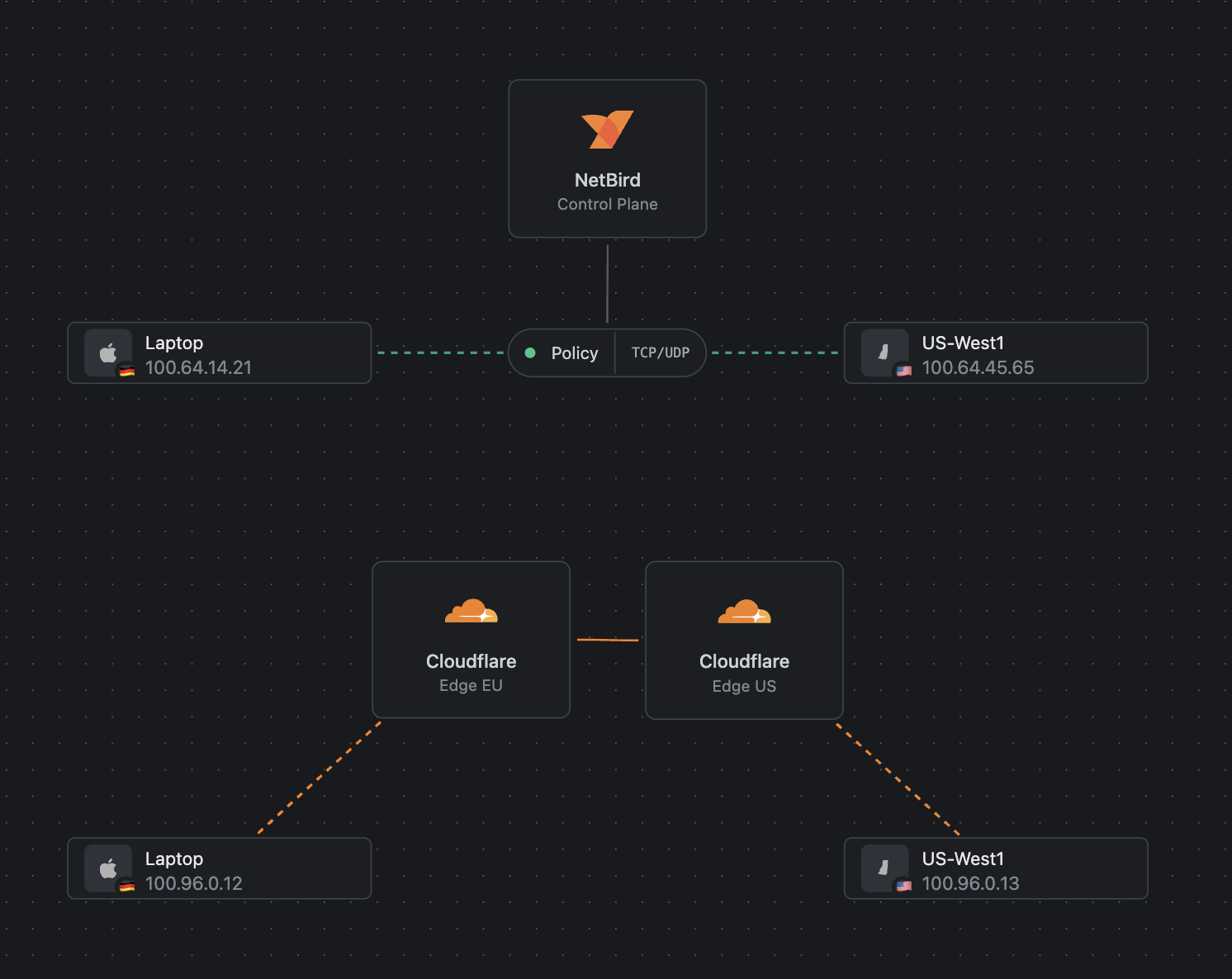

NetBird and Tailscale are peer-to-peer. When you connect two devices, they establish a direct WireGuard tunnel between them. Your traffic goes from point A to point B. Nobody in the middle sees it or touches it. It's end-to-end encrypted between your devices.

Cloudflare Mesh works differently. Every connection routes through Cloudflare's edge network. Your devices connect to the nearest Cloudflare PoP, and traffic flows through their infrastructure to reach the destination. This is important for two reasons. First, it means Cloudflare has visibility into your traffic at the edge. Second, it means every packet takes a detour through their network, even if both devices are sitting in the same data center.

Now, to give credit where it's due, Cloudflare Mesh is a significant upgrade from what they had before. Previously, their setup only supported user-to-infrastructure or unidirectional connections. Mesh unlocks true bidirectional device-to-device connectivity, which is new for them. That's a big step forward.

But the architecture still fundamentally routes everything through their edge. And that has a real impact on performance.

How We Tested

We standardized everything we could. All machines ran Ubuntu 24.04 LTS. We used iperf3 for throughput testing, with both upload and download runs. On each machine, we cycled through all three networks, running the same tests on each before switching to the next.

| Software | Version |
|---|---|
| NetBird | 0.68.3 |
| Tailscale | 1.96.4 |
| Cloudflare WARP | 2026.3.846.0 |
| iperf3 | 3.16 |
| OS | Ubuntu 24.04 LTS |
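If you want to script a similar upload/download pair yourself, here is a minimal Python sketch. It assumes the download direction is measured with iperf3's reverse mode and that an iperf3 server (`iperf3 -s`) is already listening on the peer. The helper names and the peer address in the usage note are hypothetical, not part of our test harness.

```python
import json
import subprocess


def iperf3_command(server, reverse=False, seconds=10):
    """Build an iperf3 client command that emits a JSON report."""
    cmd = ["iperf3", "--client", server, "--time", str(seconds), "--json"]
    if reverse:
        # --reverse makes the server send, so the client measures download.
        cmd.append("--reverse")
    return cmd


def receiver_mbps(report):
    """Throughput seen by the receiving side of a TCP run, in Mbps."""
    return report["end"]["sum_received"]["bits_per_second"] / 1e6


def run_pair(server):
    """Run one upload and one download test against an iperf3 server."""
    results = {}
    for label, reverse in (("upload", False), ("download", True)):
        completed = subprocess.run(
            iperf3_command(server, reverse),
            capture_output=True,
            text=True,
            check=True,
        )
        results[label] = receiver_mbps(json.loads(completed.stdout))
    return results
```

Calling `run_pair("100.64.0.2")` (a placeholder overlay address) would return a dictionary with `upload` and `download` values in Mbps for whichever overlay is active at that moment.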

Our test environments spanned a pretty wide range of real-world scenarios.

| Machine | Region | Type | Download (Mbps) | Upload (Mbps) |
|---|---|---|---|---|
| aws-east1 | US East | m5.large 2cpu/8GB | 1,910 | 2,085 |
| aws-west1 | US West | m5.large 2cpu/8GB | 2,112 | 1,834 |
| gcp-east4 | US East | n2-standard-2 2cpu/8GB | 2,859 | 2,945 |
| gcp-eu-west3 | EU West | n2-standard-2 2cpu/8GB | 3,048 | 2,831 |
| htz-falkenstein | Germany | 2cpu/4GB | 916 | 1,192 |
| htz-nuernberg | Germany | 2cpu/4GB | 916 | 1,192 |
| htz-helsinki | Finland | 2cpu/4GB | 3,553 | 3,390 |
| Residential Office | Berlin, DE | MacBook Pro | 437 | 36 |
| CEO Home | Berlin, DE | nanopi-r4s | 57 | 20 |

We documented the baseline internet speed of every machine before running any overlay tests, so we know exactly what the raw connection can do.
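One way to put those baselines to use is to express an overlay result as a fraction of the raw link speed. The sketch below is only an illustration: the function name is made up, the 437 Mbps figure is the Berlin office download baseline from the table above, and the 200 Mbps overlay figure is a placeholder rather than a measured result. Keep in mind that a baseline to a speed-test endpoint is not the same path as the one between two peers, so treat the ratio as a rough guide.

```python
def overlay_efficiency(overlay_mbps, baseline_mbps):
    """Fraction of the raw link speed that survives inside the overlay."""
    if baseline_mbps <= 0:
        raise ValueError("baseline must be positive")
    return overlay_mbps / baseline_mbps


# 437 Mbps: Berlin office download baseline from the table above.
# 200 Mbps: placeholder overlay result, NOT one of the measurements.
print(f"{overlay_efficiency(200.0, 437.0):.0%} of baseline")
```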

A Quick Note on WireGuard Modes

This is worth understanding if you're going to run your own tests or compare our numbers to others.

WireGuard can run in two modes: kernel mode and userspace mode. Kernel mode runs WireGuard directly in the Linux kernel, which is generally considered more efficient because it avoids the overhead of copying packets between kernel space and user space. Userspace mode runs the WireGuard implementation as a regular process outside the kernel.

Tailscale uses a userspace WireGuard implementation. NetBird defaults to kernel mode on Linux, but also supports userspace mode. To keep our comparison apples-to-apples, we ran NetBird in userspace mode for these tests so both P2P solutions are operating under the same conditions.

If you want to run NetBird in userspace mode yourself, it takes a single command.

Caveats

A few things to keep in mind when reading these results.

Network conditions fluctuate. We observed quite a bit of variation between runs, especially on the P2P solutions. One run NetBird would be slightly faster, next run Tailscale would pull ahead. The numbers in this article represent single test runs, not averaged results across dozens of attempts. Real-world performance will vary depending on network congestion, time of day, and routing conditions at the moment you test.

Residential connections are ISP-limited. On our home network (57 Mbps down, 20 Mbps up), there's not much any overlay can do to differentiate itself. The ISP connection is the bottleneck, not the tunnel. The interesting comparisons happen when the underlying connection has enough headroom for the overlay overhead to actually matter.
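On the run-to-run variation mentioned above: if you repeat a test several times, a median plus the min-max spread is a simple way to tell a real gap from noise. This is a rough sketch with hypothetical function names and made-up sample values. It is not how the numbers in this article were produced, since those are single runs.

```python
from statistics import median


def summarize(runs_mbps):
    """Median and min-max spread for repeated throughput runs."""
    if not runs_mbps:
        raise ValueError("need at least one run")
    return {
        "median": median(runs_mbps),
        "min": min(runs_mbps),
        "max": max(runs_mbps),
        "runs": len(runs_mbps),
    }


def distinguishable(a_runs, b_runs):
    """True only if the two min-max ranges do not overlap at all."""
    return max(a_runs) < min(b_runs) or max(b_runs) < min(a_runs)


# Made-up sample values in Mbps, for illustration only.
overlay_a = [910, 945, 930]
overlay_b = [925, 900, 950]
print(summarize(overlay_a))
print(distinguishable(overlay_a, overlay_b))
```

The non-overlap check is deliberately conservative. With only a handful of runs it is a sanity check, not a statistical test.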

European Performance: This Is Where It Gets Interesting

If you're running infrastructure in Europe, this is the section you want to pay attention to.

Same-Country Data Center to Data Center

We tested between two Hetzner data centers in Germany (Falkenstein and Nuremberg). These are geographically close and connected by Hetzner's internal backbone. This is the kind of connection where you'd expect any overlay to perform well.

Chart: Falkenstein to Nuremberg. Same country, different data centers (Hetzner Germany).

Both P2P solutions are blowing past gigabit because the underlying connection supports it and the direct tunnel adds minimal overhead. Cloudflare Mesh is capped around 250-290 Mbps. For two machines in the same country, on the same provider, traffic is leaving Hetzner's network, routing through Cloudflare's edge, and coming back. That detour is expensive.

Cross-Country European Connections

Helsinki to Germany (Hetzner to Hetzner) tells a similar story.

Chart: Helsinki to Germany. Cross-country, Hetzner to Hetzner.

P2P is roughly 2-3x faster on this route.

Cross-Provider European Connections

We also tested Hetzner Falkenstein to GCP Europe-West3, so we're crossing provider boundaries but staying in Europe.

Chart: Falkenstein to GCP EU-West3. Cross-provider, Hetzner to GCP (still in Europe).

Even crossing providers, the P2P solutions are 3-5x faster for European traffic.

The pattern here is pretty clear. For regional and local connections, especially in Europe, the edge routing model just can't compete with direct peer-to-peer tunnels. The traffic has to travel further and go through more infrastructure, and the numbers reflect that.

Where Cloudflare's Backbone Actually Wins

Now, it wouldn't be fair to just show the tests where Cloudflare struggles. There are real scenarios where their global backbone gives them an advantage, and we want to be upfront about that.

Japan to Europe

Long international routes are where Cloudflare's backbone actually earns its keep. We tested from a residential connection in Japan to two European destinations: our Berlin office (also residential) and a Hetzner data center in Nuremberg.

Chart: Japan to Berlin Office. Residential Japan to office in Berlin, Germany.

On the residential-to-residential route, Cloudflare lands roughly 1.5-2x faster than the P2P solutions. Not a blowout, but a consistent lead in both directions.

Chart: Japan to Hetzner Nuremberg. Residential Japan to Hetzner DC, Germany.

Swap the Berlin office for a Hetzner data center in Germany and the gap widens dramatically. Cloudflare hits 224 Mbps up and 269 Mbps down, while NetBird and Tailscale both sit in the 20-50 Mbps range. That's 5-8x faster on the same route.

Why? The direct internet path between a residential ISP in Japan and Europe is rough. There's no great direct peering for a WireGuard tunnel to take advantage of. Cloudflare's private backbone, on the other hand, has optimized routes across the Pacific and through to Europe. Their edge network is doing exactly what it's designed to do here.

Cross-Atlantic: Europe to US East

On longer routes between Europe and the US, Cloudflare also showed advantages on download speeds.

Chart: Office Berlin to AWS US East. Cross-Atlantic, residential office to cloud DC.

From a data center instead of a residential office, the picture shifts slightly:

Chart: Hetzner Germany to AWS US East. Cross-Atlantic, DC to DC.

The uploads on these routes were all comparable, but Cloudflare's backbone consistently provided better download throughput on cross-Atlantic connections.

US Cross-Region

For AWS East to AWS West, the P2P solutions took it back.

Chart: AWS East to AWS West. US cross-region, DC to DC.

Within the US where direct peering between major cloud providers is strong, the direct tunnel approach wins again.

Residential Connections

From our Berlin office (which is on a residential-grade connection with about 50 Mbps upload and 500 Mbps download) to Hetzner Falkenstein, all three overlays were comparable on upload since the ISP uplink is the bottleneck. But download told a different story, and the P2P solutions were 2x faster.

Chart: Office Berlin to Hetzner Falkenstein. Residential office (50 Mbps up / 500 Mbps down) to DC.

When your local bandwidth is the limit, you want the overlay adding as little overhead as possible. P2P does that. Edge routing effectively cuts the available download throughput roughly in half on this test.

From the home network (57 Mbps down, 20 Mbps up), all three overlays were capped by the ISP connection, so the differences were negligible.

UDP Performance: P2P vs Cloudflare Mesh

TCP numbers are useful, but they don't tell the whole story. By design, TCP masks network issues: it retransmits lost packets and smooths over congestion to keep data flowing. That's great for applications, but it also hides the true quality of the underlying network path.

To get a clearer picture, we ran iperf3 in UDP mode at a fixed 300 Mbps. Unlike TCP, UDP doesn't recover from packet loss, so it shows how much traffic actually makes it through.

In a Hetzner Germany → AWS West test:

| Overlay | Sent | Received | Loss |
|---|---|---|---|
| NetBird (P2P) | 300 Mbps | 295 Mbps | 1.2% |
| Cloudflare Mesh | 300 Mbps | 257 Mbps | 14% |

The difference is clear. NetBird delivers nearly all traffic with lower loss, while Cloudflare drops a noticeable portion under the same load.
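To reproduce this kind of UDP test, a fixed-rate iperf3 run with JSON output gives you datagram counts you can turn into a loss percentage. The sketch below assumes the UDP report carries `packets`, `lost_packets`, and `jitter_ms` under `end.sum`, which is how we understand iperf3's JSON layout, so check it against your own output. The helper names, the peer address, and the sample report values are all hypothetical.

```python
def udp_command(server, bitrate="300M", seconds=10):
    """Build an iperf3 client command for a fixed-rate UDP test."""
    return [
        "iperf3", "--client", server,
        "--udp", "--bitrate", bitrate,
        "--time", str(seconds), "--json",
    ]


def udp_loss(report):
    """Datagram loss (percent) and jitter (ms) from an iperf3 UDP report."""
    total = report["end"]["sum"]
    packets = total["packets"]
    lost = total["lost_packets"]
    loss_pct = 100.0 * lost / packets if packets else 0.0
    return {"loss_pct": loss_pct, "jitter_ms": total["jitter_ms"]}


# Hypothetical report fragment, shaped like the fields assumed above.
sample = {"end": {"sum": {"packets": 100000, "lost_packets": 14000, "jitter_ms": 0.8}}}
print(udp_command("100.64.0.2"))
print(udp_loss(sample))
```

Loss computed from datagram counts will not always match a figure derived from rounded Mbps values, so compare like with like when lining up results from different tools.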

This matters most for UDP-based and latency-sensitive applications like VoIP, video calls, live-streaming, gaming, and real-time data systems. These don’t retransmit lost packets, so higher packet loss directly results in glitches, lag, and degraded quality. In these scenarios, the cleaner P2P path has a clear advantage.

NetBird vs Tailscale

I know some of you scrolled straight here, so here's the short version: they're basically the same when it comes to network performance.

Across every test we ran, NetBird and Tailscale traded back and forth within what we'd consider normal network fluctuation. One test NetBird would be a few percent faster, next test Tailscale would edge ahead. Neither solution showed a consistent, repeatable advantage over the other.

This makes sense. Both are building direct WireGuard peer-to-peer tunnels. The underlying transport mechanism is the same. The differences are in the control plane, management features, and how you administer your network, not in raw tunnel performance.

What This All Means

Here's how I'd think about it. The architecture you choose depends on what you're connecting and where.

If you're connecting infrastructure regionally (especially in Europe), peer-to-peer solutions like NetBird are significantly faster. We're talking 2-5x faster on the tests we ran. The edge routing model adds overhead that compounds on local routes where the direct path is already excellent.

If you're connecting devices across long international routes with poor direct peering, Cloudflare's backbone can genuinely help. The Japan to Europe tests show that. Their global network provides optimized routing that a direct tunnel simply can't match on certain paths.

If you're running real-time or UDP-based workloads (VoIP, video conferencing, live streaming, gaming, RTP/SRT, or any application that can't tolerate retransmissions), the packet loss numbers matter more than raw TCP throughput. Our UDP tests showed NetBird losing around 1% at 300 Mbps while Cloudflare Mesh dropped 14% on the same path. For these use cases, a clean P2P path is a meaningful advantage.

If privacy matters to you, that's worth thinking about too. With NetBird, your traffic is encrypted end-to-end between peers. With Cloudflare Mesh, all traffic passes through their edge infrastructure. That's a design choice with implications for who has visibility into your network traffic.

If you're planning a larger deployment, keep in mind that Cloudflare Mesh currently caps at 50 mesh nodes per account. That's up from 10 with the old WARP Connector, but it's still a limit. NetBird and Tailscale don't have the same kind of hard node cap on their cloud standard plans. NetBird also has no node limits when self-hosted.

And no, Cloudflare Mesh is not the end of existing mesh VPNs. It's a different approach with different tradeoffs. For some use cases it's great. For others, direct peer-to-peer is clearly the better path.

Try NetBird

If you want to see these numbers for yourself, sign up for NetBird and run your own tests. It's open source, the free tier includes up to 5 users, and it takes a few minutes to get running. Install the client, connect your devices, and run iperf3 between them to see how direct peer-to-peer tunnels perform on your own infrastructure.